Welcome to issue #18 of my newsletter. Keeping it short and sweet this week as I have a lot of super interesting stuff to share. But my little newsletter keeps growing so I’m going to keep the intro section, which you can skip if you’ve already read it. If not, here’s a bit more about me.

Hi, I’m Morgan 👋

I’m the cofounder and CTO of Bold Metrics. We’re an AI company doing some interesting stuff with apparel brands and retailers, helping them unlock the power of body data. I’m not a sales or marketing person so I’ll leave it there. I’m not here to pitch you, but now you know what I do.

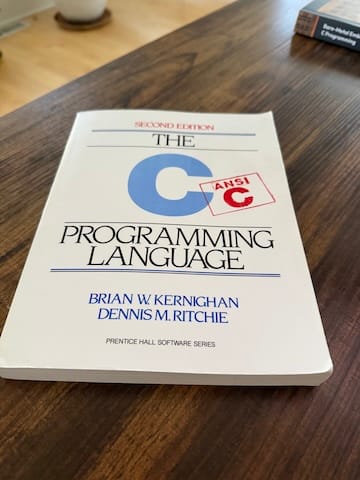

A long time ago, in a galaxy far, far away, I went to Carnegie Mellon and studied Computer Engineering and Computer Science. While I was in school, I got really interested in optimization techniques, specifically processor-level optimization using things like C and Assembly. This was my favorite book, I still have it on my desk, probably always will.

Fast-forward a bit and I fell in love with Python. Today, most of the coding I do is in Python, but I’ve been getting into Rust a bit this year and that’s been pretty darn interesting. That being said, these days I’ll be the first to admit I’m a mediocre coder at best. My focus, as a founder and CTO, is on my team. I lead a truly amazing engineering and data science team, with some of the most brilliant and incredible people on the planet. It is humbling to be able to work with such an amazing team - building and leading teams is my greatest joy in life.

Most of the topics I’ll cover here are going to be about things like optimizing and building interesting AI agents and small LLMs, agentic coding, interesting libraries or optimizations that people can leverage, and probably some robotics stuff here and there because I love robots.

Right now I have no sponsors and no subscription fee. That could change, I guess I am open to both, but haven’t thought too much about it yet, so this is where we are today.

Okay, enough from me - here’s some fun stuff that’s on my radar, and now is on yours.

TurboQuant

If there’s only one thing you read about this week, it should be TurboQuant. At a high level, the Google Research team kinda blew all our minds by announcing a set of advanced algorithms that enable massive compression for LLMs and vector search engines. And part of what makes this so cool is that it’s all training-free and requires no fine-tuning.

And…if you want to see some really neat things people are already doing with TurboQuant, here are a few examples worth checking out:

Implemented Google's TurboQuant paper on Gemma 3 4B with a custom Triton kernel for fused quantized attention. (link)

Needle-in-a-haystack using Qwen3.5-35B-A3B across 8.5K, 32.7K, and 64.2K context lengths (link)

I just implemented Google’s TurboQuant for vLLM. My USB-charger-sized HP ZGX now fits 4,083,072 KV-cache tokens on GB10 (link)
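If "training-free" compression sounds abstract, here's a tiny sketch of the general idea. To be clear, this is not TurboQuant's algorithm. It's plain uniform scalar quantization, about the simplest quantizer that needs no training data, and the function names `quantize` and `dequantize` are just ones I made up for illustration. It squeezes each float into a 4-bit code and checks how much error that introduces.

```python
import random

def quantize(vec, bits=4):
    """Uniform scalar quantization: map each float to an integer code in [0, 2**bits - 1]."""
    levels = (1 << bits) - 1
    lo, hi = min(vec), max(vec)
    # Step size between adjacent codes; fall back to 1.0 if the vector is constant.
    scale = (hi - lo) / levels or 1.0
    codes = [round((x - lo) / scale) for x in vec]
    return codes, lo, scale

def dequantize(codes, lo, scale):
    """Reconstruct approximate floats from the integer codes."""
    return [lo + c * scale for c in codes]

random.seed(0)
vec = [random.gauss(0.0, 1.0) for _ in range(128)]  # stand-in for one cached vector

codes, lo, scale = quantize(vec, bits=4)
approx = dequantize(codes, lo, scale)

# Rounding to the nearest code means the error is at most half a step.
max_err = max(abs(a - b) for a, b in zip(vec, approx))
print(f"max abs error: {max_err:.4f} (bound: {scale / 2:.4f})")
print(f"compression vs float32: {32 // 4}x, ignoring the two per-vector floats")
```

Nothing here is learned from data, which is the "training-free" part. The real work in a method like TurboQuant is in getting far better accuracy per bit than this naive version.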

—

AI trading agent based on Autoresearch

If you think Autoresearch is interesting and want to see one application of it that I feel could be going in a very fascinating direction, check out ATLAS. It’s a self-improving AI trading agent.

—

Sarah’s interview with Karpathy

Sarah interviewed Karpathy on her podcast, No Priors, and it’s honestly one of the best interviews I’ve ever seen. I was writing on X that this and Lex’s interview with Peter are probably my top two so far this year. Every time I hear Karpathy talk about really anything, it makes me realize how lucky we are to have him, not just building things that many of us use every single day, but sharing so much. This is totally fascinating, you should listen to it at least twice.

—

Dynamic Worker Loader

Cloudflare has been doing some pretty neat stuff lately. And I’ll be honest, I’m an AWS guy who hasn’t used CF a ton, but I keep finding myself drawn to it, and I keep tinkering around, and then they keep announcing interesting stuff like this that makes me want to really dive in. It’s a very cool solution for sandboxing, and a pretty slick alternative to containers. If this sounds interesting to you, the blog post I linked above has all the details in it.

—

This was a big week for me, and I just wanted to share it with all of you, because it’s something I’ve been working on for a while now and am very proud of.

—

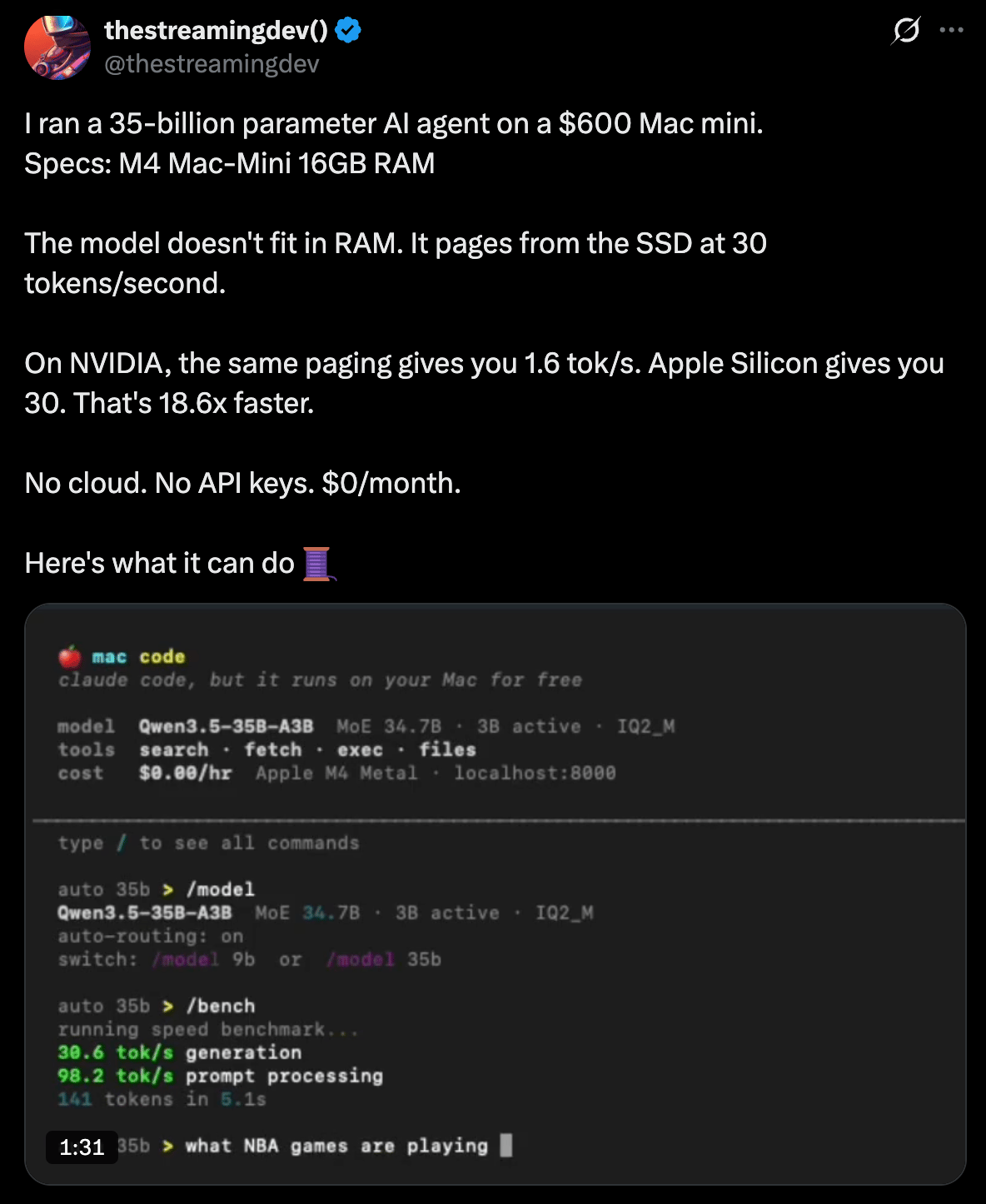

Running a 35B parameter AI agent on a $600 Mac Mini

This honestly sounds impossible to me, but if you click on the tweet above and walk through the thread, you’ll see - it’s real, he’s doing it. Not much more to say here except this: if this is what we are doing on a $600 Mac Mini today, what can we do on a sub-$500 computer a year from now?

—

Dev Browser CLI

This is amazingly cool and I definitely wanted to make sure it was in this issue. At a high level, it’s a super clean browser automation tool that lets AI agents control browsers with sandboxed JavaScript. It’s getting insane traction. I haven’t had the chance to play with it yet, but I very likely will this weekend.

—

Expect - letting agents test code in real browsers

Aiden puts out some pretty interesting stuff, and this might be one of the most interesting things I’ve seen him release. Essentially, a single command can scan unstaged changes, put together a test plan, and then run it in a live browser. It feels like soooo many of us are doing this the old-fashioned way. Maybe we don’t have to anymore.

—

Limux, a lightweight GPU-accelerated terminal workspace manager for Linux

I recently turned an old gaming PC of mine into a Linux box, and I’ve been really enjoying the entire process. This also means I’ve been on a quest to find as much cool Linux software as I can. This popped up and I think it’s pretty neat, especially for those of us who did turn an old gaming PC into a Linux box and want to maybe put the GPU to use.

Okay, that’s it for this week. No subscription fee, no sponsors, not written by AI, just kicking it old school and writing for the love of writing.

See you next week 🤘

Morgan